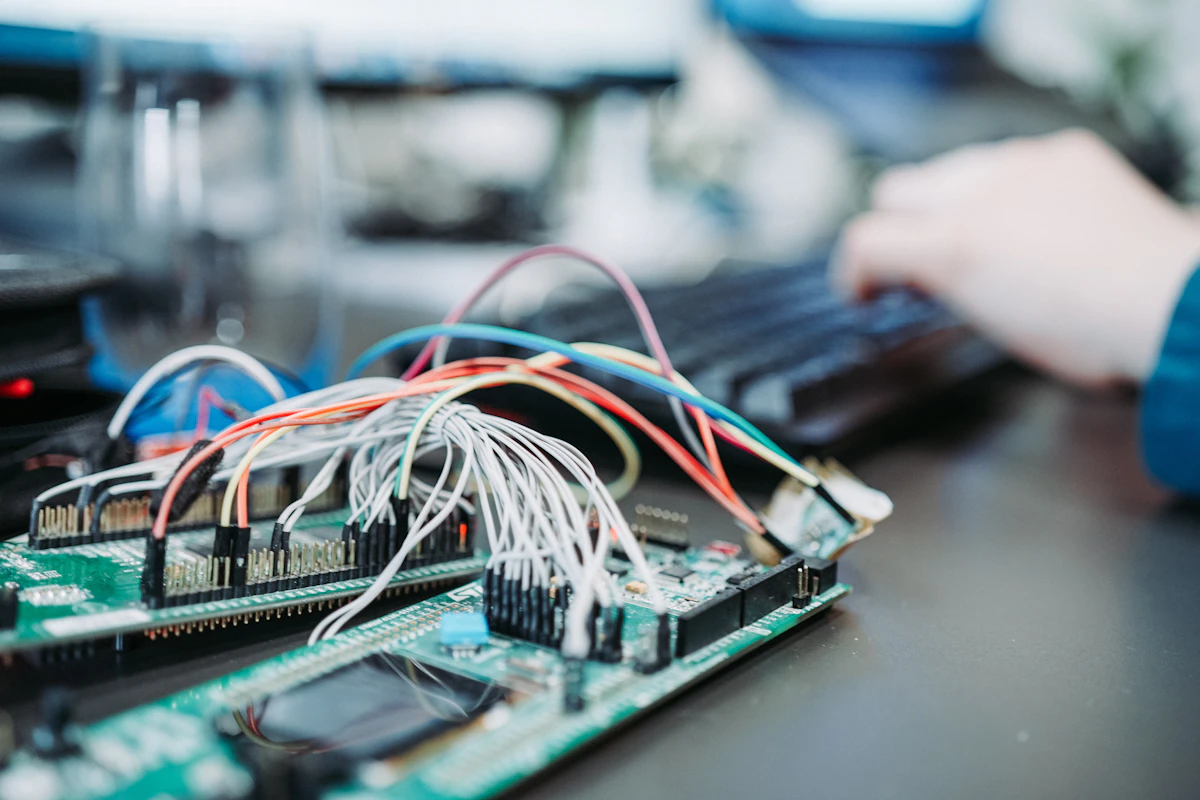

On-Premise AI Infrastructure

GPU hardware consulting, open-source model hosting, and on-prem AI deployment

This on-premise AI infrastructure module handles the full stack of self-hosted AI, from GPU hardware selection and rack provisioning to deploying open-source models such as LLaMA, Mistral, Whisper, and YOLO. It includes performance benchmarking, resource monitoring, and a security-first architecture for data-sovereign organizations.

Features

What's Included

GPU Hardware Consulting

Assess workload requirements and recommend optimal GPU configurations — NVIDIA A100, L40S, RTX 4090 — with cost-performance analysis and vendor-neutral sourcing guidance.

Open-Source Model Hosting

Deploy and manage open-source models (LLaMA, Mistral, Whisper, Stable Diffusion, YOLO) on your own hardware with quantization options for memory optimization.
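To give a feel for why quantization matters for memory, here is a rough sketch that estimates the memory taken by model weights at different bit widths. The function name and the 7-billion-parameter figure are illustrative assumptions, and the estimate covers weights only; real deployments also need room for the KV cache, activations, and runtime overhead.

```python
def estimate_weight_memory_gib(num_params: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights only, in GiB.

    Ignores KV cache, activations, and framework overhead, so treat
    the result as a lower bound rather than a sizing guarantee.
    """
    if bits_per_weight <= 0:
        raise ValueError("bits_per_weight must be positive")
    total_bytes = num_params * bits_per_weight / 8
    return total_bytes / (1024 ** 3)


if __name__ == "__main__":
    params = 7e9  # hypothetical 7-billion-parameter model
    for bits in (16, 8, 4):
        gib = estimate_weight_memory_gib(params, bits)
        print(f"{bits}-bit weights: about {gib:.1f} GiB")
```

Halving the bit width halves the weight footprint, which is often what decides whether a model fits on a single card.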

Performance Benchmarking

Run standardized benchmarks on your hardware to measure tokens-per-second, inference latency, and batch throughput — with comparison reports across configurations.
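The metrics named above can be collected with a small timing harness. The following is a minimal sketch, not the module's actual benchmark suite: `generate` is a hypothetical callable standing in for whatever inference call your stack exposes, and `fake_generate` is a stub that only simulates work.

```python
import statistics
import time
from typing import Callable, Dict, List


def benchmark(
    generate: Callable[[List[str]], List[int]],
    batch: List[str],
    runs: int = 5,
) -> Dict[str, float]:
    """Time `generate` over several runs of the same batch.

    `generate` (hypothetical) takes a batch of prompts and returns the
    number of tokens produced for each prompt.
    """
    if runs < 1:
        raise ValueError("runs must be at least 1")
    latencies: List[float] = []
    total_tokens = 0
    for _ in range(runs):
        start = time.perf_counter()
        token_counts = generate(batch)
        latencies.append(time.perf_counter() - start)
        total_tokens += sum(token_counts)
    total_time = sum(latencies)
    return {
        "mean_latency_s": statistics.mean(latencies),
        "max_latency_s": max(latencies),
        "tokens_per_second": total_tokens / total_time if total_time > 0 else 0.0,
        "prompts_per_second": len(batch) * runs / total_time if total_time > 0 else 0.0,
    }


def fake_generate(prompts: List[str]) -> List[int]:
    """Stub model: sleeps briefly and pretends to emit 32 tokens per prompt."""
    time.sleep(0.01)
    return [32 for _ in prompts]


if __name__ == "__main__":
    print(benchmark(fake_generate, ["hello", "world"], runs=3))
```

Running the same harness with different batch sizes or hardware gives comparable numbers across configurations, provided the prompts and generation settings stay fixed.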

Resource Monitoring Dashboard

Real-time monitoring of GPU utilization, VRAM usage, CPU load, and network throughput across all AI nodes with alerting on capacity thresholds.
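As an illustration of alerting on capacity thresholds, the sketch below checks one node's readings against configurable limits. The `NodeSample` type, the metric names, and the threshold values are all assumptions made for this example; how samples are actually collected, and network throughput, are left out.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class NodeSample:
    """One monitoring reading for a single AI node (hypothetical shape)."""
    node: str
    gpu_util_pct: float
    vram_used_gib: float
    vram_total_gib: float
    cpu_load_pct: float


# Illustrative limits only; tune these for your own hardware.
DEFAULT_THRESHOLDS: Dict[str, float] = {
    "gpu_util_pct": 95.0,
    "vram_pct": 90.0,
    "cpu_load_pct": 85.0,
}


def check_thresholds(
    sample: NodeSample,
    thresholds: Optional[Dict[str, float]] = None,
) -> List[str]:
    """Return one alert message per metric at or above its limit."""
    limits = DEFAULT_THRESHOLDS if thresholds is None else thresholds
    if sample.vram_total_gib <= 0:
        raise ValueError("vram_total_gib must be positive")
    readings = {
        "gpu_util_pct": sample.gpu_util_pct,
        "vram_pct": 100.0 * sample.vram_used_gib / sample.vram_total_gib,
        "cpu_load_pct": sample.cpu_load_pct,
    }
    alerts = []
    for metric, limit in limits.items():
        value = readings[metric]
        if value >= limit:
            alerts.append(f"{sample.node}: {metric} at {value:.1f} (limit {limit:.1f})")
    return alerts


if __name__ == "__main__":
    sample = NodeSample("node-1", gpu_util_pct=50.0, vram_used_gib=46.0,
                        vram_total_gib=48.0, cpu_load_pct=20.0)
    for alert in check_thresholds(sample):
        print(alert)
```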

Model Catalog Management

Internal model registry tracking deployed models, versions, hardware assignments, and access permissions — with one-click model swap and rollback.
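One way to picture version tracking with swap and rollback is a per-model history of deployed versions. This is a minimal in-memory sketch under that assumption; the class and method names are hypothetical, and access permissions and persistence are deliberately omitted.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class ModelEntry:
    """Catalog record for one model (hypothetical shape)."""
    name: str
    hardware: str
    history: List[str] = field(default_factory=list)  # versions, oldest first

    @property
    def active_version(self) -> Optional[str]:
        return self.history[-1] if self.history else None


class ModelCatalog:
    """In-memory registry; the latest entry in `history` is the live version."""

    def __init__(self) -> None:
        self._entries: Dict[str, ModelEntry] = {}

    def deploy(self, name: str, version: str, hardware: str) -> None:
        """Swap the active version by appending it to the model's history."""
        entry = self._entries.get(name)
        if entry is None:
            entry = ModelEntry(name=name, hardware=hardware)
            self._entries[name] = entry
        entry.hardware = hardware
        entry.history.append(version)

    def rollback(self, name: str) -> str:
        """Drop the active version and return the one now in effect."""
        entry = self._entries[name]
        if len(entry.history) < 2:
            raise ValueError(f"no earlier version of {name!r} to roll back to")
        entry.history.pop()
        return entry.history[-1]

    def active(self, name: str) -> Optional[str]:
        return self._entries[name].active_version


if __name__ == "__main__":
    catalog = ModelCatalog()
    catalog.deploy("llama", "v1", hardware="gpu-node-1")
    catalog.deploy("llama", "v2", hardware="gpu-node-1")
    print(catalog.active("llama"))    # v2
    print(catalog.rollback("llama"))  # v1
```

Keeping the full history rather than a single "previous" pointer allows repeated rollbacks, at the cost of needing a retention policy in a real system.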

Security & Network Isolation

Air-gapped deployment with no external API calls — all inference runs locally behind your firewall with encrypted model storage and audit logging.

Plans

Feature Comparison

See what's included at every level — each tier builds on the previous one.

| Feature | Basic | Advanced | Expert | Enterprise |
|---|---|---|---|---|
| Single GPU server setup and configuration | Yes | Yes | Yes | Yes |
| One open-source model deployment | Yes | Yes | Yes | Yes |
| Basic resource monitoring (CPU, GPU) | Yes | Yes | Yes | Yes |
| Web-based management console | Yes | Yes | Yes | Yes |
| Multi-GPU server with load balancing | No | Yes | Yes | Yes |
| Model catalog with version management | No | Yes | Yes | Yes |
| Performance benchmarking reports | No | Yes | Yes | Yes |
| Automated model updates and rollback | No | Yes | Yes | Yes |
| Multi-node GPU cluster orchestration | No | No | Yes | Yes |
| Quantization and optimization consulting | No | No | Yes | Yes |
| Advanced monitoring with alerting rules | No | No | Yes | Yes |
| API gateway for model inference routing | No | No | Yes | Yes |
| Air-gapped deployment (zero egress) | No | No | No | Yes |
| Hardware procurement and rack setup | No | No | No | Yes |
| 24/7 infrastructure support SLA | No | No | No | Yes |
| Compliance documentation (ISO, SOC2) | No | No | No | Yes |

Basic

4 features

- Single GPU server setup and configuration

- One open-source model deployment

- Basic resource monitoring (CPU, GPU)

- Web-based management console

- — Multi-GPU server with load balancing

- — Model catalog with version management

- — Performance benchmarking reports

- — Automated model updates and rollback

- — Multi-node GPU cluster orchestration

- — Quantization and optimization consulting

- — Advanced monitoring with alerting rules

- — API gateway for model inference routing

- — Air-gapped deployment (zero egress)

- — Hardware procurement and rack setup

- — 24/7 infrastructure support SLA

- — Compliance documentation (ISO, SOC2)

Advanced

8 features

- Single GPU server setup and configuration

- One open-source model deployment

- Basic resource monitoring (CPU, GPU)

- Web-based management console

- Multi-GPU server with load balancing

- Model catalog with version management

- Performance benchmarking reports

- Automated model updates and rollback

- — Multi-node GPU cluster orchestration

- — Quantization and optimization consulting

- — Advanced monitoring with alerting rules

- — API gateway for model inference routing

- — Air-gapped deployment (zero egress)

- — Hardware procurement and rack setup

- — 24/7 infrastructure support SLA

- — Compliance documentation (ISO, SOC2)

Expert

12 features

- Single GPU server setup and configuration

- One open-source model deployment

- Basic resource monitoring (CPU, GPU)

- Web-based management console

- Multi-GPU server with load balancing

- Model catalog with version management

- Performance benchmarking reports

- Automated model updates and rollback

- Multi-node GPU cluster orchestration

- Quantization and optimization consulting

- Advanced monitoring with alerting rules

- API gateway for model inference routing

- — Air-gapped deployment (zero egress)

- — Hardware procurement and rack setup

- — 24/7 infrastructure support SLA

- — Compliance documentation (ISO, SOC2)

Enterprise

16 features

- Single GPU server setup and configuration

- One open-source model deployment

- Basic resource monitoring (CPU, GPU)

- Web-based management console

- Multi-GPU server with load balancing

- Model catalog with version management

- Performance benchmarking reports

- Automated model updates and rollback

- Multi-node GPU cluster orchestration

- Quantization and optimization consulting

- Advanced monitoring with alerting rules

- API gateway for model inference routing

- Air-gapped deployment (zero egress)

- Hardware procurement and rack setup

- 24/7 infrastructure support SLA

- Compliance documentation (ISO, SOC2)

Use Cases

Where This Module Fits

Data-sovereign AI deployment for government agencies

Healthcare on-prem AI processing for patient data privacy

Financial institution AI compliance with local data residency

Manufacturing edge AI for real-time quality inspection

Defense and classified workload AI infrastructure

Technology

Built With

Production-grade technologies trusted by enterprises worldwide.

Related Modules

Works Well With

AI Model Serving

Deploy, version, and serve machine learning models via scalable inference APIs

AI Object Detection

General-purpose computer vision with custom model training and video stream analysis

Speech-to-Text Engine

Real-time audio transcription with multi-language support and speaker diarization
